Published 2026-02-05

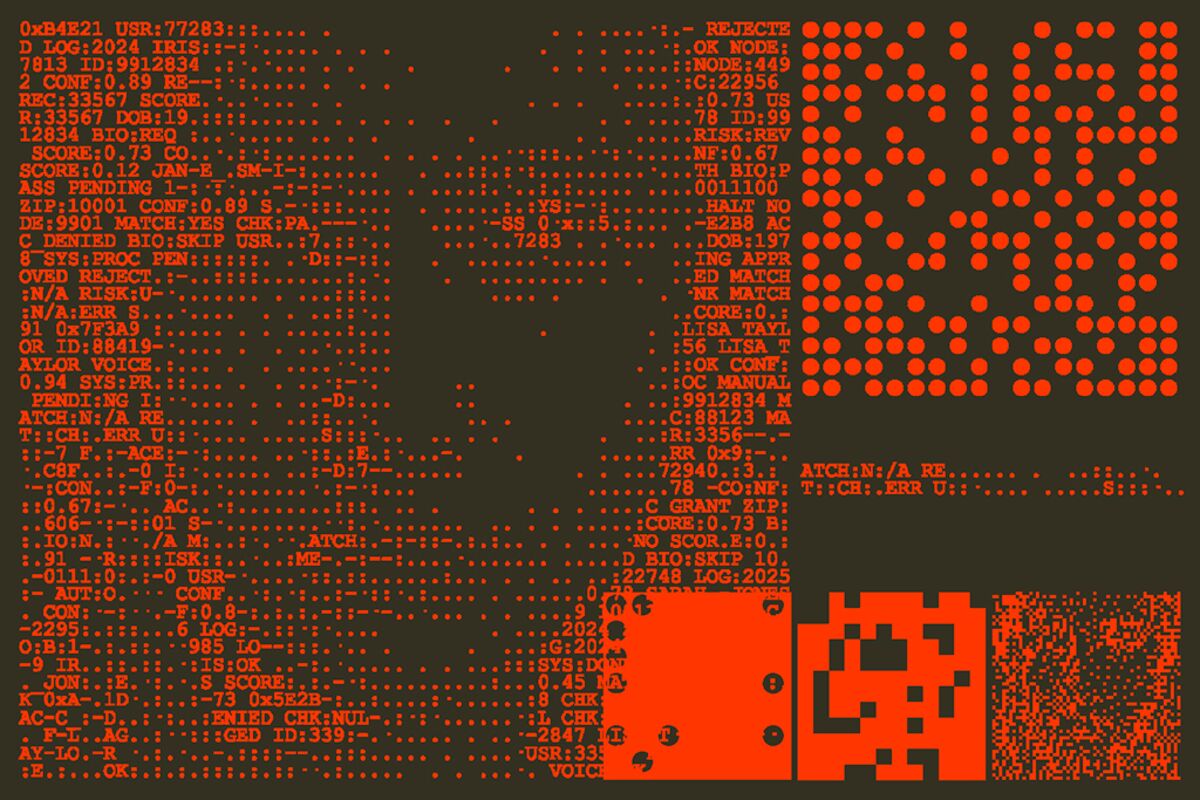

Summary: A reported analysis of user data indicates that Chinese AI systems may produce different results depending on a user's identity and political activity, raising concerns about bias, fairness, and ethics in AI deployment.

What We Know

- Chinese AI systems are reportedly producing different results depending on an individual’s identity and political activity.

- This variation suggests the presence of bias or discrimination embedded within these AI models.

- The findings are based on an analysis of user data, though the methodology and scope of that analysis have not been detailed.

- There is no confirmation of whether these biases are intentional or a result of training data issues.

- No official statements or responses from AI developers or authorities have been provided at this time.

What’s Still Unclear

- The exact nature and extent of the bias or discrimination in Chinese AI systems.

- Which types of user data or identity markers are involved in the bias.

- Potential impacts on users or broader societal implications.

- Whether measures are being taken to address or mitigate these biases.

- Details about the study or analysis methodology used to uncover these findings.

Context

Artificial intelligence systems are increasingly integrated into various aspects of daily life and governance worldwide. Ensuring fairness and avoiding discrimination in AI outputs is a critical concern across the industry, especially in regions with complex political and social landscapes.

Why It Matters

Bias and discrimination in AI can lead to unfair treatment of individuals based on identity or political activity, potentially impacting privacy, rights, and social cohesion. Recognizing and addressing these issues is essential for ethical AI development and deployment.

What to Watch Next

- Official responses or policy changes related to AI bias in China.

- Further research or independent audits to verify and understand the scope of bias in these systems.

- Development of guidelines or standards to prevent discrimination in AI applications.

- Potential technological solutions aimed at reducing bias in machine learning models.

FAQ

Q: Are these biases intentional?

A: It is not confirmed whether the biases are intentional or accidental, as details are limited.

Q: Will this affect AI development policies in China?

A: It is unclear at this stage; further official statements are needed to understand policy implications.

Source Transparency

- This post is based on a short brief and may not include full details.

- No direct source link was provided.

- Details may change as more reporting or official statements emerge.

Original brief: Chinese AI systems are producing different results based on someone’s identity and political activity…
